Background

The unintended consequences of this amendment could have a significant impact on Europeans, ranging from victims of cyberbullying, who face illegal hate speech online, to SMEs who risk losing the value of their content through illegal sharing. Despite being the victims, and despite having provided all the information required by the DSA to have this illegal content removed expeditiously, they would have no certainty that online services will actually act on the notice upon receiving it.

Explanation/issue

Our non-profit organization, which focuses on cyberbullying and cyberhate, combats illicit and harmful content online in order to build a safe, "Respect by Design" online environment, and we trust that the DSA can be a key vehicle to reach this goal.

We observe that the current wording constitutes a step back from the wording of the existing E-Commerce Directive when it comes to online liability and the diligent fight against illegal content.

Proposed Article 14(3a) contradicts two key DSA principles:

– the existing legal obligations of hosting services

– the characterization of illegal content as content which cannot remain online once it has been properly notified to the online service

The immediate effect of the proposed wording will be to allow illicit content to remain online for longer periods of time, as negligent or non-diligent online services could use Article 14(3a) to keep their liability exemption despite failing to remove illegal content expeditiously after it has been adequately reported to them. This is not acceptable and runs against the fundamentals of the DSA and the protection of EU citizens against the spread of illicit content online.

Request

This is why we respectfully ask you to delete Article 14(3a) in order to ensure that platforms remain accountable for illicit and harmful content.

Exhibit

About Respect Zone and the DSA in general:

Respect Zone is a European NGO founded in France in 2014, with branches in various EU territories and an office in Brussels. Aiming to deter and tackle cyberviolence, it is well aware of, and involved in preventing, the many harmful experiences digital services can cause children and teenagers. The NGO works closely with schools on this matter. Although responsibility does not lie with digital services alone, Respect Zone offers several tools and solutions so that they can all do their part in this endeavor. For instance, among the NGO's 50 proposals for a safer internet, Respect Zone promotes the following measures for child protection online:

1. A COMPREHENSIVE “RESPECT BY DESIGN” APPROACH: From coding to interface, platforms should make content flagging and easy contact with moderators a top priority, and should rely on their ergonomics, drawing on the contributions of nudge theory, to deter most harmful behaviors and/or speech by design.
2. CHILD-FRIENDLY INFORMATION: Children and teenagers are the most at risk from illegal content. They are also the ones most likely to play with the rules by testing and bending them. Online or offline, authority cannot be forced upon them; it must be accepted and understood. Thus, platforms should present their terms of service with wording and media adapted to each age group.
3. CHILD-FRIENDLY TUTORIALS: Besides their duty to prevent and fight illegal content hosted on their spaces, platforms must be bound to keep their minor users constantly informed. Platforms should offer clear, adapted content raising awareness of the risks of cyberviolence, the effects it may have on well-being, and the resources available to users on and off the platform.
4. A EUROPEAN CERTIFICATION CHARTER: Respect Zone awards labels to owners and/or editors of digital spaces that respect its charter on moderation and respect online. In a broader effort, a Charter (i.e. a soft-law instrument) could be adopted to help platforms comply with its provisions, building on the measures developed above.

It has been flagged on several occasions that online platforms can be manipulated; influence on elections is one example, and the Cambridge Analytica case is one among many. Online platforms have not yet earned enough trust in their internal practices to guarantee non-discrimination, justice, solidarity, democracy and gender equality. The European Commission's 5th evaluation of the platforms' Code of Conduct shows that Twitter's removal rate of illegal content is lower than in 2019. This is confirmed by a test carried out by four French associations during the lockdown, which showed that out of 1,100 clearly illegal tweets, only 12% had been removed. Furthermore, Facebook's Civil Rights Audit – Final Report shows that the network is a real “echo chamber” for extremism. Indeed, “the algorithms used by Facebook inadvertently fuel extreme and polarizing content”. The report indicates that the “Related Pages” feature, “which suggests other pages a person might be interested in, could push users who engage with white supremacist content toward further white supremacist content”. Other social networks, such as Snapchat or Telegram, are places where intimate photos are shared without people’s consent, and these platforms do not react enough (or fast enough) to remove the content or eliminate the groups that share it. This was widely observed in France during the lockdown with the “Fisha” groups, in which teenagers shared sexual pictures of young girls without their consent, and with the death and rape threats made against girls by users of the Curious Cat network. On transparency, however, Twitter took a step forward in August 2020, as it now labels state-controlled media accounts. It remains to be seen whether this will be effective in protecting the integrity of democracy against any kind of state influence.
To conclude, in view of these elements (which are not exhaustive, since the platforms' shortcomings are many and spread worldwide every day), Respect Zone considers that the internal practices of online platforms are, for the moment, not sufficiently effective.

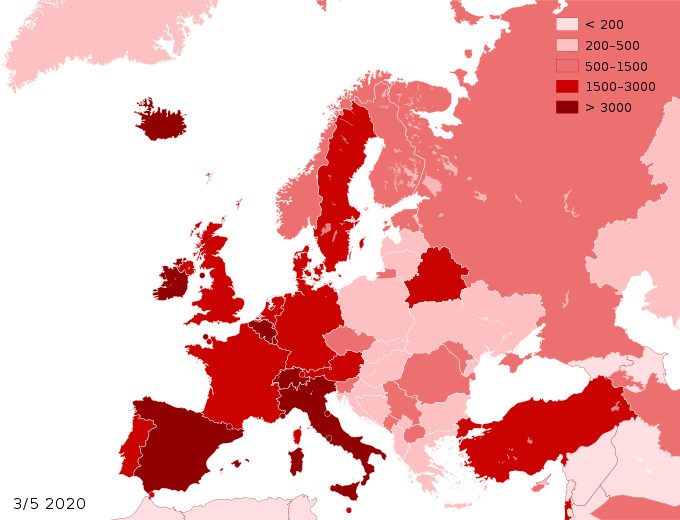

Even though this is not the place for such a discussion, it must be noted that the COVID-19 pandemic has revealed all the limits of current moderation and content-control methods on the major platforms. This observation is alarming and should not be dismissed amid the heated arguments of either side. It has been shown that in times of crisis, when normalcy is suddenly suspended, hate and disinformation go unregulated. This observation goes beyond the platforms' sole responsibility: it is a warning to democracies. Enforcing anti-hate and anti-disinformation rules is particularly essential and critical at such times, which are conducive to civil unrest. A collective response is needed. To tackle the rise of hate during this recent period, Respect Zone has both broadcast a dedicated video to raise public awareness of the risk of disinformation online and partnered with other NGOs representing the interests of population groups specifically targeted by the recent wave of hate. Respect Zone initiated a partnership with AJCF (the Young Chinese of France Association) to design and deliver training and counter-speech against anti-Asian sentiment; likewise, Respect Zone has partnered with the French Ministry of Sport to help the Government develop safety standards in the sports sector. Watch the video: https://www.youtube.com/watch?v=ZlytJLxqphM

Online platforms are key to fighting online hate speech, and their responsibilities must be clearly defined. EU online safety measures should also apply to private messaging platforms: no distinction should be made between the public and private aspects of a platform, as cyberviolence often happens in private messaging systems. The Respect Zone Charter should be promoted and disseminated. Respect Zone advocates a single Respect Button, as developed further in this response. As a benchmark, we find the labelling system developed over time at EU level in the video game sector (PEGI) interesting, as it is now implemented as part of standardized systems recognized by users.

“Reappearance” is understood here as the posting of content identical, in whole or in certain notable and obvious aspects, to previous content ruled illegal by a court. In such cases, platforms should be authorized to remove the content. Where the context is unclear (reappearance for informative purposes, irony or humor, risk of false negatives), platforms must exercise due diligence, and they can be helped by mediators. To guarantee that platforms have the information they need to carry out their duties, Eurojust could coordinate and host a European Judicial Cyber-delinquency Register, which platforms would be duty-bound to consult. Eurojust has already been shortlisted, since 2015, to oversee a European Judicial Anti-Terrorism Register.

The risks of using automated instruments are well known. Any answer to this question must therefore strike a balance between preventing over-censorship and the need to effectively regulate flows through digital services. Respect Zone is an NGO specialized in the fight against cyberviolence. Its primary resources include the expertise of lawyers, many of whom specialize in intellectual property. These professionals are already experienced in adapting regulatory systems and techniques to digital transformation. On the basis of their recommendations, Respect Zone recommends that the use of automation be regulated by distinguishing between content protected by intellectual property and content without licenses:

1. For licensed content protected by intellectual property regimes, platforms should be allowed to regulate flows with automatic instruments, from detection to deletion.
2. For unlicensed content, the use of automatic systems must be limited to detection (except in cases of “reappearance”, see question 2.5). Deletion must be decided by a human moderator. The decision must be reasoned, communicated to the user, and verifiable through an internal procedure when it is based on a failure to comply with the terms of service. In the case of a violation of the law, deletion can only be decided by a judge. In that case, platforms must suspend removal but are obliged to limit the visibility and shareability of the post. A banner must also inform third-party users that the content they are viewing is subject to a procedure.

Furthermore, so that public authorities do not lose control over how the digital public space is accessed, and can effectively prevent the risks of over-censorship by algorithms or any other existing or future technologies (including AI), platforms must be required to disclose, and give access to, the moderation tools they use.

As an EU NGO, Respect Zone is particularly active in the fight against content that is not obviously illegal. While manifestly illegal content can be identified by platforms, notably through algorithms, is monitored by specialized associations, and is subject to clearly defined removal procedures, this is not the case for content that is not manifestly illegal. Platforms do not have the tools to identify it, especially since this content is often sent to personal accounts by unidentified groups, and the victims are often fragile individuals or minors. The victims of such content often have no appropriate means of reacting, and the harm caused to them is often serious or even irremediable. Moreover, recourse to specialized counsel often entails excessive costs for victims, and children cannot access it if they do not want to discuss the attacks against them with their parents. The main difficulty lies in the fact that, in the absence of manifest illegality, platforms do not assume legal responsibility and cannot put in place tools that infringe freedom of expression. Respect Zone considers, however, that even if platforms are not directly responsible for this content and the damage it causes, they cannot refuse to assume a social responsibility arising from the tools they develop, make available to their users, and from which they generate significant revenues. Respect Zone therefore suggests that platforms be required to comply with several obligations in order to fight this type of content and limit the damage it causes:

- First, platforms should set up training courses adapted to their audience, particularly according to age. It would be useful to provide that, before accessing certain services, internet users undergo a short training course. It would also make sense for platforms to require the authors of statements that have been the subject of notifications and content withdrawal to follow appropriate training as a condition for access to the service.
- Second, platforms should provide a single reporting button giving access not only to notification but also to a reminder of the applicable rules and to NGOs offering assistance.
- To ensure the appropriate legal handling of such illicit and harmful content, Respect Zone considers that, given their social responsibility, platforms must participate in financing the fight against it. It therefore proposes that platforms be required to devote part of their income to financing approved NGOs offering legal and psychological assistance to victims. Platforms could choose which independent associations to fund, but the level of their obligations would be set by law, according to their activity and the income generated in each State. Platforms should appoint a mediator to ensure that the interventions of accredited NGOs are taken into account and to monitor the measures implemented to stop harmful actions. Even if the content is not manifestly illegal, its authors should be informed of the harm it causes.
- Finally, Respect Zone argues that platforms should be required to report regularly, in principle annually, on the measures taken to train users and remove harmful content, and to analyze the results of these actions.

Respect Zone stands for democratic values, civil liberties, human rights and the Rule of Law. Crises and threats to our society cannot be a pretext for a state of exception. The recent pandemic, like every other crisis, has only revealed the limits of the current legal framework. These limits must be corrected within the Rule of Law; any special measures would only weaken our democracies. Furthermore, the issues faced during the recent crisis (disinformation, the rise of hate speech, concerns about the monopolistic positions of some digital service corporations) have been noticed for some years. In reaction, political authorities seem determined to restore their status as effective regulatory bodies. Germany adopted the NetzDG against disinformation in 2018. France followed in 2020 with a law against hate speech and disinformation online. In the US, congressional committees and subcommittees are discussing the practices and economics of the sector and its main actors. Thus, means of regulation and cooperation must be designed and implemented for constant activity, not only for potential special cases:

1. DRAFTING A EUROPEAN SCHEME FOR A HIGH-SPEED JUDICIAL SYSTEM: Our institutions must adapt to the new rhythm of the digital age.
2. FOUNDING A EUROPEAN INDEPENDENT REGULATORY BODY FOR DIGITAL COMMUNICATIONS AND TECHNOLOGIES: Absorbing the current European Union Agency for Cybersecurity (ENISA), the agency should be authorized to monitor, control, investigate and sanction digital services.
3. A EUROPEAN OBSERVATORY FOR DIGITAL HUMAN RIGHTS – A FORUM FOR ALL THE STAKEHOLDERS: The Agency should host an Observatory made up of representatives of civil society, research, culture and digital services, on the model offered by the French “CSA” and its Observatory.
4. A EUROPEAN JUDICIAL CYBER-DELINQUENCY REGISTER: see question 2.5.

Respect Zone helps make digital spaces safe zones that allow freedom of expression and respectful behavior. Respect for others and for criticism guarantees freedom of creation and the protection of pluralism. Given the importance of digital services as media in the digital public space, they must also meet a certain standard of respect towards users. Therefore, Respect Zone is campaigning for a short, explicit, standardized EU charter to be added to the many Terms of Service that users find hard to understand, detailing moderation methods from detection to deletion, the means of recourse open to users, and the support resources available to users exposed to cyberviolence. Each new user must consent to this charter. The Terms of Service and the standardized Respect Charter should also be adapted in presentation and wording so that they are understandable to different age groups. In addition, platforms should use nudges in their interface recalling the provisions of the ToS and the Charter. In the event of a dispute with a user, the platform must give reasons for its decisions and respect the adversarial principle at all times.

Articles 12, 13 and 14 of the Directive were adopted more than 20 years ago, at a time when social networks were just emerging. The definitions of the activities concerned, namely “mere conduit”, “caching” and “hosting”, have not been changed. The exclusion of liability for services consisting of the storage of information provided by recipients of the service has led internet players to favor this model, to avoid any liability for the content they made available. The clarification in Article 14 that this exclusion of liability is conditional on the provider not being aware of the illegal activity or information clearly had negative consequences. To avoid liability, platforms considered that they had to organize themselves so that they could argue they had no knowledge of the content they were storing, and thus ruled out any moderation of that content. Faced with the proliferation of illegal and hateful content on the internet, platforms have had to put in place mechanisms to remove very quickly any content whose illegal nature they have been informed of, but these a posteriori interventions are not an effective response to the dissemination of such content. Case law has not helped the reasonable application of these principles, since it has classified as mere hosts the platforms that design applications and make content available, treating these services as “consisting of the storage of information provided by a recipient of the service”. Obviously, most of these platforms do not simply store information provided by their customers. Above all, they offer users services giving access to selected content and generate revenue from this provision. While the exclusion of liability might be understandable for services offering simple storage, it is not understandable for those that build a business model on the provision of content.
The Directive does not take into account the difference between a provider that offers a storage service in return for remuneration and one that bases its income on the provision of content, the storage and selection of which it provides free of charge. While it is understandable that technical service providers are subject to limited liability, insofar as they do not have to be aware of the content they store or transmit and take withdrawal measures as soon as they become aware of it, this is obviously not the case for platforms that make content available to the public. For hosting services, the liability exemption for third parties’ content or activities is conditional on a knowledge standard (i.e. once they obtain ‘actual knowledge’ of illegal activities, they must ‘act expeditiously’ to remove the content, otherwise they may be found liable). The implementation of measures to combat the dissemination of hateful or unlawful material should in no way be considered a factor leading to the platforms’ liability. On the contrary, implementing measures such as training, moderation and assistance to victims should be taken into account to reduce intermediaries' liability and to help them take an active role in combating cyberviolence.

RESPECT ZONE advocates precisely defining the obligations of all types of services according to their purpose and size, subjecting compliance with these obligations to monitoring by an independent authority, and empowering this authority to impose administrative sanctions on companies that fail to implement the measures by which they are bound.

RESPECT ZONE argues that internet services must take a range of measures to fight the dissemination of illegal content while ensuring freedom of information. This starts with educating users about the responsibility they incur, particularly when making hateful statements that harm a fragile population. Services must also put in place measures to effectively combat such content by providing simple notification processes, ensuring the rapid removal of illegal content and making it possible to hold those who disseminate such content accountable. These measures should be subject to regular reporting.

RESPECT ZONE advocates that services making content available on the internet should offer the means necessary to ensure that such content respects the rights of users and third parties in terms of online safety against any risk of cyberviolence. These services should be required to devote a budget proportionate to their income to developing and financing these means, and to designate a person responsible for ensuring compliance with these principles. While the largest services should be required to deliver results, any service that makes content available on the internet should be subject to a minimum set of obligations. The role of anti-cyberviolence NGOs and experts, whose independence is highly valuable, must be recognized, whether in advising platforms, training their moderators or reporting illegal content.

RESPECT ZONE proposes that platforms be required to include NGOs in their ecosystem, while guaranteeing their independence, allowing them to act as advisors or auditors in order to perfect their compliance programs.

Respect Zone is a French NGO tackling and deterring cyberviolence. The NGO works with public and private partners to raise awareness of these issues, train officers of the Court and support victims. We stand for a safer and more respectful internet based on our 50 proposals to remedy InternHate and our Charter for Online Respect (see links below). The DSA is a unique opportunity to design a new legal framework that defines the highest standards of user safety and involves platform representatives and civil society actors in policy implementation. Respect Zone advocates enhanced transparency of Terms of Service, especially regarding the protection of human dignity and child safety rules pertaining to online moderation, which is essential to restoring trust and respect between users and between services and customers. We highlight that an efficient preventive approach consists in adopting “respect by design”. With this approach, Respect Zone stresses the need to implement a uniform “Respect me”-type reporting button for all online services connected with EU-based users, standardizing a common preventive and protective process that gives access to basic information about user rights, helps victims and allows users to flag allegedly illicit content. It also appears necessary to fund an independent European body involving NGOs specialized in the matter to audit, regulate and monitor digital services’ activities against hate speech and other unlawful or harmful content. Furthermore, Respect Zone invites the European Institutions to take into consideration the challenges raised by the rise of semi-private digital spaces as main places of hate dissemination. Respect Zone’s role is to help develop these key values of pluralism and free speech, together with the defense of the rights and fundamental liberties that everyone should enjoy in their digital life, free from hate, prejudice, fake news and bullying.
